OpenZen 1.0 Release

Going Full Circle for Sensor Data Streaming with OpenZen

Since the foundation of LP-Research, it is not only important for us to provide excellent hardware to our customers but we also want to provide software components which ease the adoption and usage of our products. Over the years, we have provided various libraries to support customers using our sensor hardware on a diverse set of platforms.

As our range of sensor offerings is growing, we realized that we need to consolidate our software library stack while still supporting multiple platforms. We wanted to use this opportunity to create a more modular system to work with sensors with various measurement components.

Figure 1 – OpenZen Unity plugin connected to a LPMS-CU2 sensor and live visualization of sensor orientation.

Based on theses requirements, we developed OpenZen. It is our take on a high performance sensor data streaming and processing library. It combines our experience gained during mopre thant five years of sensor data processing with modern software techniques. The core of OpenZen is developed with the modern C++14 language. We are hosting the source code in an open source repository for seamless public domain access to learn and contribute to the code base.

Core Concept

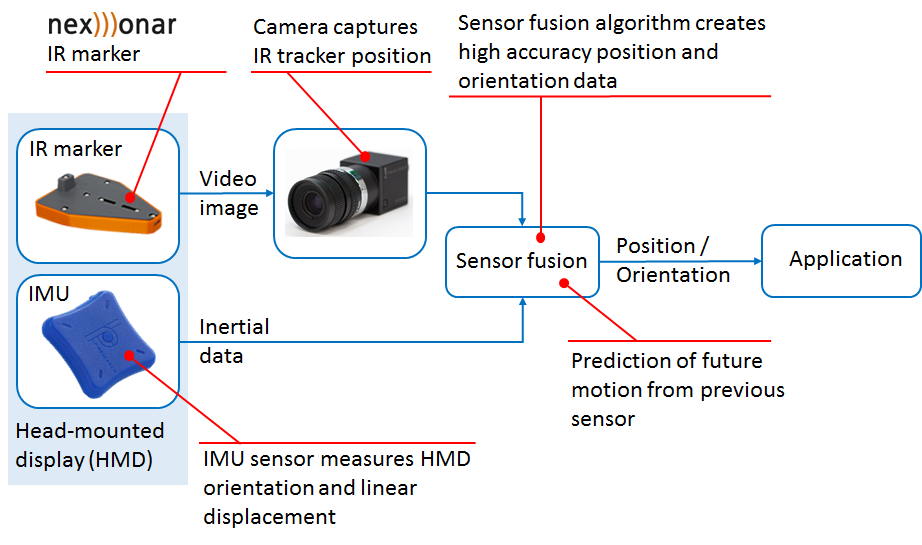

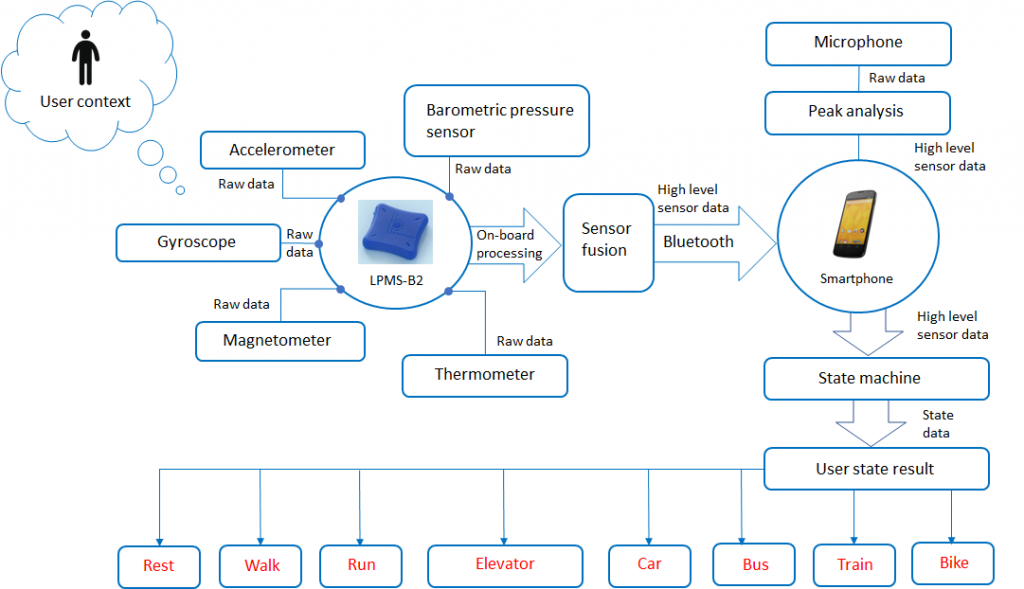

One basic principle of OpenZen is to abstract the sensor components provided by a sensor from the transport layer of the communication. In this way, once the user is familiar with the OpenZen API, a wide range of sensor types via various connection layers can be used. To reach the lowest latency and the highest sensor data throughput, we designed OpenZen to be fully event-based and without any polling loops which could introduce delays.

Sensor Types and Connectivity

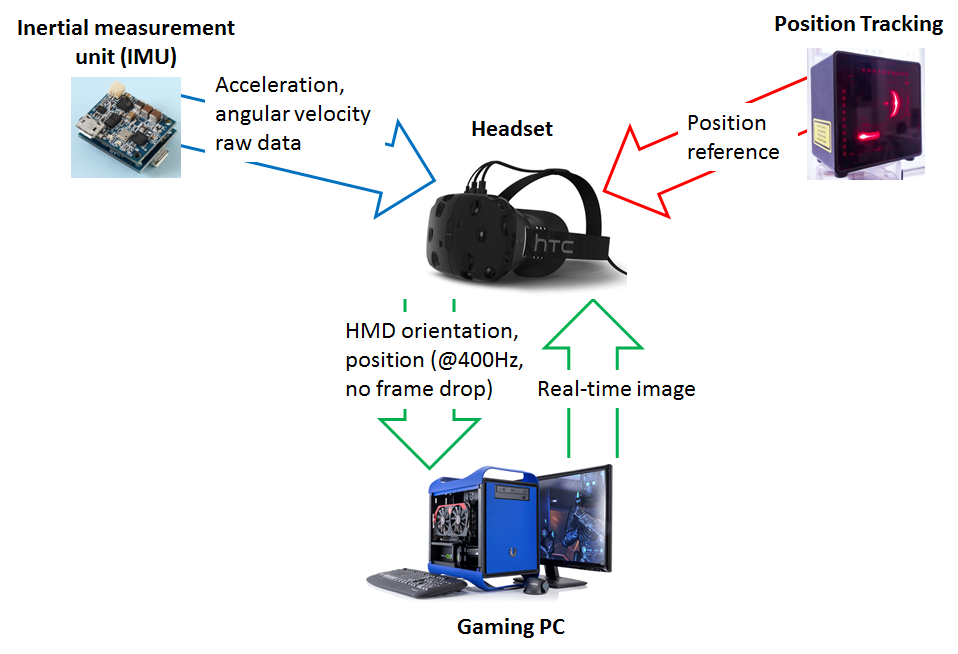

With release 1.0, OpenZen provides a sensor interface for the measurements of inertial-measurement units (IMU) and the output of global navigation satellite systems (GNSS). For example, our new LPMS-IG1P sensor is a combined IMU and GNSS-unit. Both units can be read-out via OpenZen.

A list of supported sensors is here.

OpenZen supports sensor connections via various interfaces like USB, serial port, CAN-Bus and Bluetooth. Furthermore, measurement data from sensors can also be streamed via a network and received on a second system by an OpenZen instance.

A list of supported transport layers is here.

Operating Systems and Programming Languages

Currently, OpenZen can be compiled and used on Windows, Linux and MacOS systems. We are working on ports to more platforms, for example Android. Due to its modular design, the OpenZen API can be accessed from many programming languages. At this time, we support the C, C++ and C# programming languages and we provide a ready-to-go Unity plugin.

OpenZen’s Code Repository

https://bitbucket.org/lpresearch/openzen/

OpenZen’s Documentation

https://lpresearch.bitbucket.io/openzen/

OpenZen’s Release Downloads

https://bitbucket.org/lpresearch/openzen/downloads/

OpenZen’s Unity Plugin